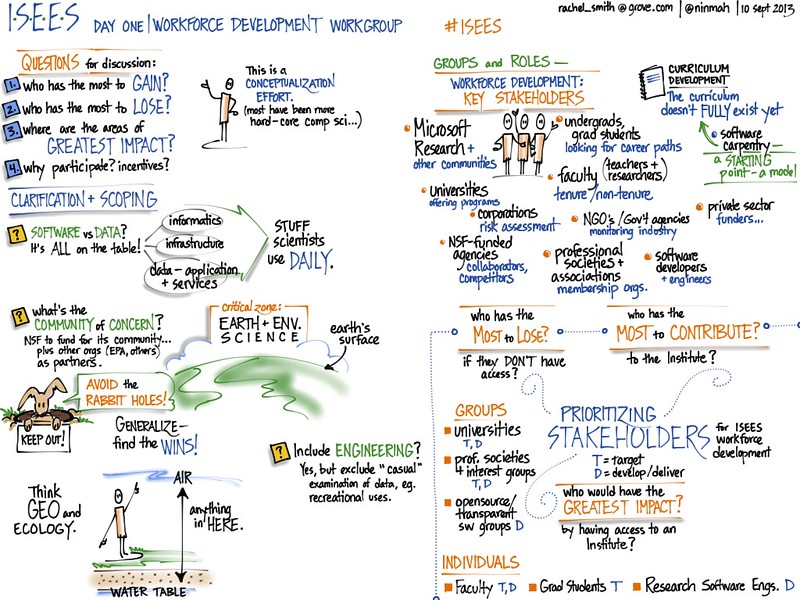

Education / Workforce Development Group

Grand synthesis cannot be done opaquely and still be science. It must be able to scale and evolve.

How did data succeed? What features really made the difference and what were just sideshows?

- Sticks (funder and journal mandates?)

- Working groups (NCEAS)

- Training

- External forces: there is more data.

Why one center? Why not 15 centers, divided by discipline rather than region? Then synthesize the synthesis centers.

(On-the-ground risk assessment; the huge representation from outside the academy.)

Computational training - math stats or computer science? Both.

- Grassroots

- Infrastructure

- Killer app examples

It is (relatively) easy to just do it and do it wrong: grand challenge synthesis that just plugs different models and different data together in a terrible way.

So what? Step 1 is to try. Step 2 is to iterate. We must be able to do step 2.

Papers don’t scale. What was once a robust exchange is largely talking past each other. We look at different data,

Levels of software

- stats

- one off models

- custom analytics

- modeling frameworks

- community models

- workflows

- compute engines

- data management

- services (BLAST)

The ODD (Overview, Design concepts, Details) framework for describing individual-based models. It is hard to define or describe a model when it cannot be concisely stated in mathematics.

See Polhill et al. 2008.

Stakeholders

- Who has the most to lose? The student. Perhaps the taxpayer.

- Who would have the most impact (on training?) Administrators (e.g. university level). Funder. Reviewer.

- Who has the most to contribute? Researcher-developer (author).

Understanding current skills and practice: https://doi.org/10.1525/bio.2012.62.12.8

Work involving computation is often considered interdisciplinary. We don’t

Literacy

Role of institutional instruction vs the software center.

Teaching directly vs supporting training.

Understanding, development, and publication of community standards. Something for reviewers of papers and grants, and for departments, to point to.

Day 1 Wrap-up

Organization and Governance

- Stakeholders: Students, Educators, Researchers, Professional societies & Institutions, Funders, Data Repositories, Software Community

- Client: Researchers.

- Where to engage

- Values: results-oriented, transparent, collaborative, community-driven, useful

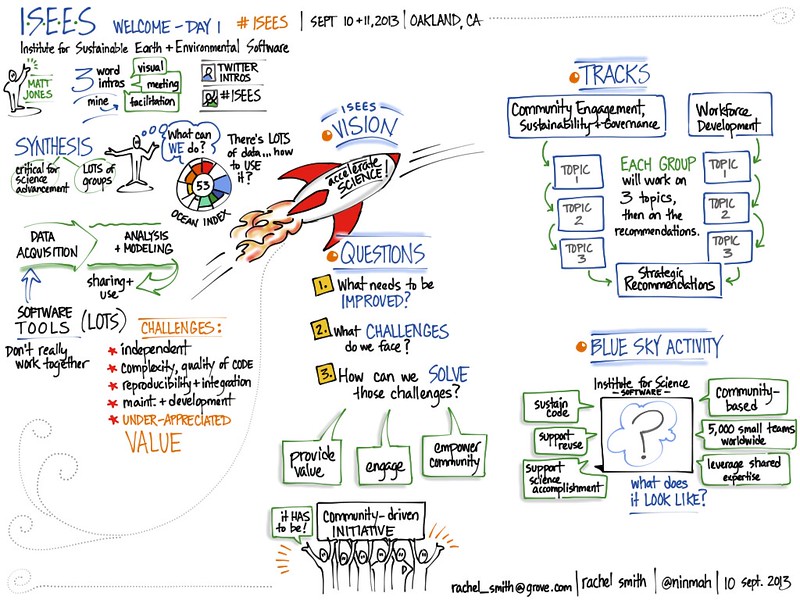

Rachel Smith’s visual notes